On the tip of my tongue

Say these words out loud.

‘The tip of the tongue, the lips and the teeth.’

Whilst you were speaking, what were your tongue and lips doing? How were you breathing? Can you breathe in and still speak?

Now try reciting this (rather peculiar) poem. It contains every sound (phoneme) used in spoken English.

The pleasure of Shawn’s company

Is what I most enjoy.

He put a tack on Ms. Yancey’s chair

When she called him a horrible boy.

At the end of the month he was flinging two kittens

Across the width of the room.

I count on his schemes to show me a way now

Of getting away from my gloom.

This ‘panphonic’ poem was written by linguist Neal Whitman, and was used in the film Mission: Impossible III (2006).

Did you notice that whilst you were talking, your tongue and lips never moved sideways?

Reaching out across a divide: Israeli Prime Minister Yitzhak Rabin, U.S. President Bill Clinton and PLO Chairman Yasser Arafat at the White House in 1993 (Image: Wikimedia Commons)

Talking connects us across all cultural boundaries, and sets us apart from other animals. Our diverse vocal repertoire, contributing to nearly 7,000 languages worldwide, has no equivalent anywhere in the animal kingdom. We arrange complex sounds into phrases with rhythm, stress and intonation (prosody), and deliver them with visual emphasis using facial expressions and gestures.

Traditionally, linguists have regarded our speech as too complex to have arisen by natural selection, suggesting instead that it resulted from a sudden event such as a ‘freak’ genetic mutation.

This view is at odds with what we understand about the rest of our biology. Evolution works by selecting from existing variation in forms and behaviours. Adaptations are not a ‘best-design’ solution to a survival problem, but a balance of innovations that arrive with inherited constraints.

During our first seven years of life we learn to articulate the sounds specific to the language(s) we hear. We structure these sounds into syllables, and use them to build words, phrases and sentences that convey meaning. We also use these sounds to coin new words and to give existing words new meanings. For our ancestors to begin to do this, however, the physical ability to make this diversity of sounds must already have been in place.

How do we physically produce speech sounds?

![X-rays of a human jaw showing tongue positions for the cardinal vowels, 1917](http://www.42evolution.org/wp-content/uploads/2014/09/E1-400x392.jpg)

X-rays of a human jaw, taken by Dr H. Trevelyan George at St. Bartholomew’s Hospital, London, in January 1917. The black dashed line in these X-rays is a thin metal chain on the tongue’s upper surface. The pictures reveal how the tongue changes position when producing the ‘cardinal’ vowels [i, u, a, ɑ]. These sounds are voiced by controlling the flow of breath and causing vibrations in the vocal cords. English consonants are voiced or voiceless, and are made using five main movements: we touch the lips together or against the teeth, place the tongue between or onto the back of the teeth, or against the hard palate, and lift the tongue against the soft palate. We also use an open-mouthed turbulent flow of air to make aspirated sounds, as in the ‘h’ of ‘hair’ (Image: Wikimedia Commons)

Sound generation (phonation) in almost all mammals involves coordinating breathing with control of the tension in the vocal folds of the larynx. We then shape these basic sounds by selectively filtering their harmonics through the resonances of the throat and mouth, and articulate precise sound sequences using rapid, rhythmic movements of the tongue, lips and associated structures.

At the simplest level, speaking involves alternating open-closed movements of the jaw at the same time as generating sound in the larynx. This produces an alternating stream of open (resonant) and closed (muted) sounds. We build words and phrases from these alternating ‘segments’, using the lips and tongue to produce precisely articulated consonants and control pitch, timbre, tone and stress.

We vocalise as other mammals do, using our feeding and breathing apparatus. The muscle movements that operate both chewing and speaking are controlled by rhythmic nerve impulses from ‘Central Pattern Generators’. These are autonomous nerve ‘modules’ in the lower brain and spinal cord, and they coordinate many of our repetitive movements, from walking to vomiting.
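The alternating stream of open and closed segments described above can be illustrated with a deliberately simplified toy model, sketched here in Python. It assumes, purely for illustration, that jaw opening follows a smooth repeating rhythm (standing in for the output of a Central Pattern Generator), and that every ‘open’ moment counts as a resonant segment (‘V’) and every ‘closed’ moment as a muted one (‘C’). The function `jaw_segments` and its parameters are hypothetical and do not come from any published speech model.

```python
import math


def jaw_segments(cycles: int, samples_per_cycle: int = 8) -> str:
    """Toy model: label each sample of a rhythmic jaw movement as open ('V',
    resonant) or closed ('C', muted), merging runs of the same label into
    one segment.

    Illustrative assumption only: jaw aperture = -cos(phase), so each cycle
    starts closed, opens, and closes again, like a repeated 'ba-ba-ba'.
    """
    segments: list[str] = []
    for i in range(cycles * samples_per_cycle):
        phase = 2 * math.pi * i / samples_per_cycle
        aperture = -math.cos(phase)  # -1 = fully closed, +1 = fully open
        label = "V" if aperture > 0 else "C"
        # A new segment starts only when the jaw switches between open and closed.
        if not segments or segments[-1] != label:
            segments.append(label)
    return "".join(segments)


if __name__ == "__main__":
    print(jaw_segments(3))
```

With three cycles, the toy produces three open segments separated by closures. Real speech layers specific consonants, vowels and laryngeal control on top of this basic rhythm; the sketch only shows how one repeated open-close movement yields the alternation.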

Which aspects of our speaking abilities are found in other animals?

Jack, a military working dog, barking during his training; Rochester, New York, 2009. Dogs are unusual amongst vocal mammals; their calls include barking sequences (bow-wow-wow) that alternate between mostly identical open-close jaw movements. In humans, this alternation is a universal speaking mode. The dog barking in this video is also making other coupled rhythmic communication signals; note the tail wagging and ear movements (Image: Wikimedia Commons)

There is no animal equivalent to the combined movements that make up our vocal cycle, although other animals make all of these movements. Most mammals call with their mouths open, using coupled Central Pattern Generators that link the out-breath with sound production (phonation) in the larynx. A few animals such as dogs make occasional calls using a partial open-close oscillating jaw movement, although they typically repeat the same sound (bow-wow-wow). We coordinate the circuits for breath control and phonation with another set of pattern generators that operate the rhythmic movements of our jaw, lips and tongue.

These movements have other functions, as in the suckling of newborns. This ability defines us as mammals. But these movements may also be linked to talking. James Lund and co-workers suggest that the human Central Pattern Generators controlling chewing, licking and sucking also participate in speech.

Peter MacNeilage goes on to suggest that the rhythmic, repetitive movements used for eating have been coordinated in mammals since the clade arose some 200 million years ago, and that they lie at the root of our articulation skills. As we speak, our tongue moves up and down, and front to back, in the mouth. Chewing also includes sideways motions of the tongue and jaw. These are not included in our vocal movements; indeed, they would leave us more prone to biting our tongue. MacNeilage proposes that coupling our pre-existing capability for making vocal calls with this subset of the movements used during eating gave our more immediate ancestors the capacity to articulate simple ‘proto-syllables’.

Baby chimpanzee (Pan troglodytes) at Beijing Zoo. Chimpanzees can be taught to recognise several hundred human words, but none have reproduced these verbally, even when raised in a human environment. Chimpanzees make occasional calls in a series (something like syllables in speech), although repetition of one sound is more usual. In the variable-sound calls, the arrangement of components does not seem to be significant. In contrast, we can say e.g. ‘cat’, ‘tack’ and ‘act’, using varied sequences of the same sounds to convey different meanings (Image: Wikimedia Commons)

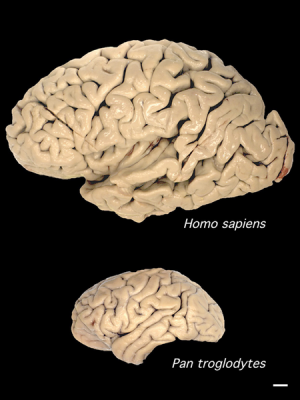

Does this then apply to our nearest relatives, the chimps? Philip Lieberman has shown that their vocal apparatus is anatomically suitable to produce a range of human syllables, and yet they do not speak. This suggests that they cannot coordinate their Central Pattern Generator signals for vocal sound production and chewing. The reason for this appears to be linked to differences in cognition. Recent work comparing the neurology underpinning chimp calls and human word-based speech has found that the neural circuits driving these respective vocalisations originate from different parts of the brain.

However, chimps and other primates do make rhythmic face and jaw movements, producing lipsmacks, tongue smacks, teeth-chatters and other facial gestures. Lipsmacks involve moving the jaw without the teeth coming together, as in human speech, and are often made by juveniles as they approach their mother to suckle. Primate grooming is a one-to-one interaction involving touch, eye contact and other positive exchanges, which often involve ‘taking turns’.

Our flexible and dextrous tongue allows us to control precisely the pitch and resonance, as well as the rhythm, of our speech and the articulation of our sounds. The tongues of newborn babies lie flat in their mouths. The permanent descent of our larynx occurs early in our development; this repositions the tongue, drawing its back portion down into the throat and allowing it to move freely.

Male koala (Phascolarctos cinereus) at Billabong Koala and Aussie Wildlife Park, Port Macquarie, New South Wales, Australia. Almost all mammals lower their larynx to vocalise. Koalas are unusual; along with lions, deer and humans, they have a permanently descended larynx. In these non-human animals this results in a dramatically resonant, deep male mating call. These other animals also show much more pronounced differences in body form between males and females than we do (Image: Wikimedia Commons)

The ability to learn and reproduce complex sounds in the form of song has arisen independently in whales, in humans, and at least three times in birds. In many songbird species only the males make complex learned song calls, which they sing to attract mates. In contrast, our language ability is gender-balanced. No other primates have elaborated male mating calls. However, the second descent of the larynx in boys during puberty suggests that sexual selection may have refined our control of the resonant qualities of our vocal tract.

How could natural selection have favoured our ancestors’ ability to produce these sounds?

Our vocal flexibility comes at a price: an increased individual risk of choking. Our ancestors’ ability to form ‘proto-words’ and ‘proto-song’ must have given their tribal group a selective advantage that outweighed this risk.

Strong bonds bring advantages to social groups, including relative safety in numbers from predators, collective understanding of their environment, higher levels of parental care from the extended family (resulting in better juvenile survival), and coordination of hunting and foraging. Primates use grooming, which combines touch with emotionally coded facial and vocal gestures, to make and maintain social bonds.

Chimpanzees (Pan troglodytes) grooming at Gombe Stream National Park, Tanzania. Grooming in primates cleans the outer body, decreases stress, allows acceptance into the group and reveals the social hierarchy. Chimps and other higher primates may utter pleasure-indicating sounds during grooming, particularly if being attended to by a higher-status member of the tribe. Chimpanzee tribes range from 15 to 120 individuals. In their “fission-fusion society” all members know each other but feed, travel and sleep in smaller groups of six or fewer. The membership of these small groups changes frequently (Image: Wikimedia Commons)

Robin Dunbar points out that the amount of time primates spend grooming can be predicted from their neocortex (‘thinking’ brain) size and social group size. It is thought that our large-brained hominid ancestors lived in tribes of up to around 150 individuals, which would have required them to spend up to 40% of their time manually grooming each other to maintain social bonds. Speech may have provided a time-saving alternative: a means of ‘vocally grooming’ others. A speaker may have been able to connect and bond with multiple individuals simultaneously.

Human babies making new or unusual sounds quickly receive their parents’ attention. Ulrike Griebel and Kimbrough Oller suggest that our hominin ancestors’ babies may have produced sounds that provoked more parental attention, and so more effective bonding. Their better survival would select for babies with vocal variation and flexibility.

A large pod of over 80 dusky dolphins (Lagenorhynchus obscurus) swimming together in South Bay, Kaikoura, New Zealand. Although there is some physical touching amongst individuals in the pod, dolphins and killer whales use vocal grooming to coordinate with each other when hunting, during migrations, and during ‘play’ (Image: Wikimedia Commons)

Involuntary pleasure sounds encourage continued social interactions between primates of all ages. Perhaps our hominid ancestors learned to make diverse and pleasurable sounds as babies, and as a result were better equipped as adults to vocally groom their extended families.

Conclusions

- Fine motor control of the tongue, lips and jaw allows us to produce a huge repertoire of diverse sounds. This control likely comes from combining the movements used for eating with the production of vocal sounds.

- Our ancestors may have evolved this capability at a time when their social group size increased, with a more time-efficient form of grooming required if the cohesion of the ‘tribe’ was to be maintained.

- Selection for diverse and flexible speech sounds may have begun with babies using suckling and other sounds to gain more parental attention. These individuals would be more effective as adults at ‘vocally grooming’ their wider social group.

- Our ability to produce speech sounds is balanced between genders, suggesting that group selection rather than sexual selection has driven the evolution of this ability.

Text copyright © 2015 Mags Leighton. All rights reserved.

References

Arbib, M.A. (2005) From monkey-like action recognition to human language: an evolutionary framework for neurolinguistics. Behavioral and Brain Sciences 28, 105-124.

Bouchet, H. et al. (2013) Social complexity parallels vocal complexity: a comparison of three non-human primate species. Frontiers in Psychology 4, article 390.

Charlton, B. et al. (2011) Perception of male caller identity in koalas (Phascolarctos cinereus): acoustic analysis and playback experiments. PLoS ONE 6, e20329.

Fitch, W.T. (2010) The Evolution of Language. Cambridge University Press.

Green, S. and Marler, P. (1979) The analysis of animal communication. In Social Behavior and Communication (P. Marler, ed.) pp. 73-158. Springer.

Hauser, M. et al. (2002) The faculty of language: what is it, who has it, and how did it evolve? Science 298, 1569-1579.

Oller, D.K. and Griebel, U. (eds) (2008) Evolution of Communicative Flexibility: Complexity, Creativity, and Adaptability in Human and Animal Communication. Vienna Series in Theoretical Biology, MIT Press.

Lehmann, J., Korstjens, A.H. and Dunbar, R.I.M. (2007) Group size, grooming and social cohesion in primates. Animal Behaviour 74, 1617-1629.

Lieberman, P. (2006) Toward an Evolutionary Biology of Language. Harvard University Press.

Lund, J.P. and Kolta, A. (2006) Brainstem circuits that control mastication: do they have anything to say during speech? Journal of Communication Disorders 39, 381-390.

MacNeilage, P. (2008) The Origin of Speech. Oxford University Press.

MacNeilage, P.F. and Davis, B.L. (2000) On the origin of internal structure of word forms. Science 288, 527-531.

Parr, L.A. et al. (2007) Classifying chimpanzee facial expressions using muscle action. Emotion 7, 172-181.

Pearson, K.G. (2000) Neural adaptation in the generation of rhythmic behaviour. Annual Review of Physiology 62, 723-753.

Redican, W.K. and Rosenblum, L.A. (1975) Facial expressions in nonhuman primates. Stanford Research Institute.

Titze, I.R. (1989) Physiologic and acoustic differences between male and female voices. The Journal of the Acoustical Society of America 85, 1699-1707.

Traxler, M.J. et al. (2012) What's special about human language? The contents of the "Narrow Language Faculty" revisited. Linguistics and Language Compass 6, 611-621.

van Wassenhove, V. (2013) Speech through ears and eyes: interfacing the senses with the supramodal brain. Frontiers in Psychology 4, article 388.

Weusthoff, S. et al. (2013) The siren song of vocal fundamental frequency for romantic relationships. Frontiers in Psychology 4, article 439.

Willemet, R. (2013) Reconsidering the evolution of brain, cognition, and behaviour in birds and mammals. Frontiers in Psychology 4, article 396.

What’s so different about human speech?

Upon leaving the island, Odysseus is warned that storms lie ahead. His route home to Ithaca passes the sirens; monsters whose beautiful, haunting voices lure sailors to their deaths.

He sets a course and explains his intention to the crew. At his command they fasten him to the mast, seal their ears with wax, and prepare for their encounter.

They reach treacherous waters. The siren song reaches into Odysseus’ mind, resonating with his deepest longings. The storm rages within. He struggles, but his bindings, the result of his clear intention, secure him tightly to the mast.

Forced to stand still and listen, he finds that he starts to hear the voices for what they really are; the empty fears of his own soul. He relinquishes his fight and hears the voice at his own still centre. The storm calms.

The crew notice that he has returned to his senses. They cut him loose.

He is indeed a wise and worthy captain.

This ancient footprint, first made in soft mud, is an index which shows us the passing of a three-toed theropod dinosaur. Denver, Colorado (Image: Wikimedia Commons)

Speaking involves transmitting and interpreting intentional signs, some of which are also used in the instinctual communications of animals. These signals are of three kinds:

1. An ‘index’ physically shows the presence of something, e.g. wolves tracking their prey by scent.

2. An ‘icon’ resembles the thing it stands for, like a photograph or a painting. Dolphins, apes and elephants recognise their own reflection; we assume that they interpret this two-dimensional image as representing their three-dimensional physical selves.

3. A symbol associates an unrelated form with a meaning. Our words are symbols, linking an idea with unique sound-and-movement sequences. They do not resemble the things they represent.

The ‘Union Jack’, a symbol of the United Kingdom since the union of Great Britain and Ireland in 1801. It is made up of three other flag symbols: the Cross of St George for England (inset, top), St Andrew’s Saltire for Scotland (centre), and St Patrick’s Saltire for Ireland (below) (Image: Wikimedia Commons)

Symbolism is almost unknown amongst animals. One rare exception is the ‘waggle dance’ of honeybees, a stereotyped dance that encodes the direction and distance of a food source.

Although chimpanzees can be taught to use some sign gestures, they do not naturally communicate using symbols. In contrast, we use our symbolic language intentionally.

Our uses of speech are unique. We revisit our memories, order our thoughts and make future plans. With a destination in mind, we can listen for our ‘inner voice’, map out our route, take a stand against the storm of inner and outer distractions, and find our way home.

How is our speech unique?

Normal speech is already multi-channel; our words are accompanied by the musicality of our speaking, and our facial expressions and other physical gestures transmit many layers and levels of complex meaning. Writing is another mode of communicating our language. Social media transmits our language into virtual worlds. The online social networking service Facebook commissioned ‘Facebook Man’ to commemorate its 150 millionth user (Image: Wikimedia Commons)

Aspects of our language ability are found in other animals, but the way we have combined and developed these traits is uniquely human.

1. We use any available channel.

Most human languages use vocal speech. Under circumstances where speaking is not possible, we find other ways, e.g. sign languages and Morse code.

2. We build our words from parts that gain meaning as they are combined

Most of the syllables we use to build words lack meaning on their own. Combining them together (as in English) or adding tonal shifts (as in Chinese) creates words.

3. We code our words with meanings, making them into symbols

The chimpanzee (Pan troglodytes) known as Washoe (1965-2007) was the first non-human animal to be taught American Sign Language. She lived from infancy with a human family, and was taught around 350 sign words. It was reported that upon seeing a swan, Washoe signed “water” and “bird”. Chimpanzees are capable of learning simple symbols. However, Washoe did not make the transition to combining these symbols together into new meanings (Image: Wikimedia Commons)

Symbols are arbitrary, i.e. they do not need to resemble the thing they represent, and they are ‘displaced’, i.e. they can refer to things that are not present. Our words symbolise ideas, experiences and things.

4. We combine these symbols to make new meanings.

We build words into phrases and stories, use these to revisit and share our memories, combine them into new forms, and communicate this information to others in various ways. Combining different symbols brings us a new understanding, which changes how we respond.

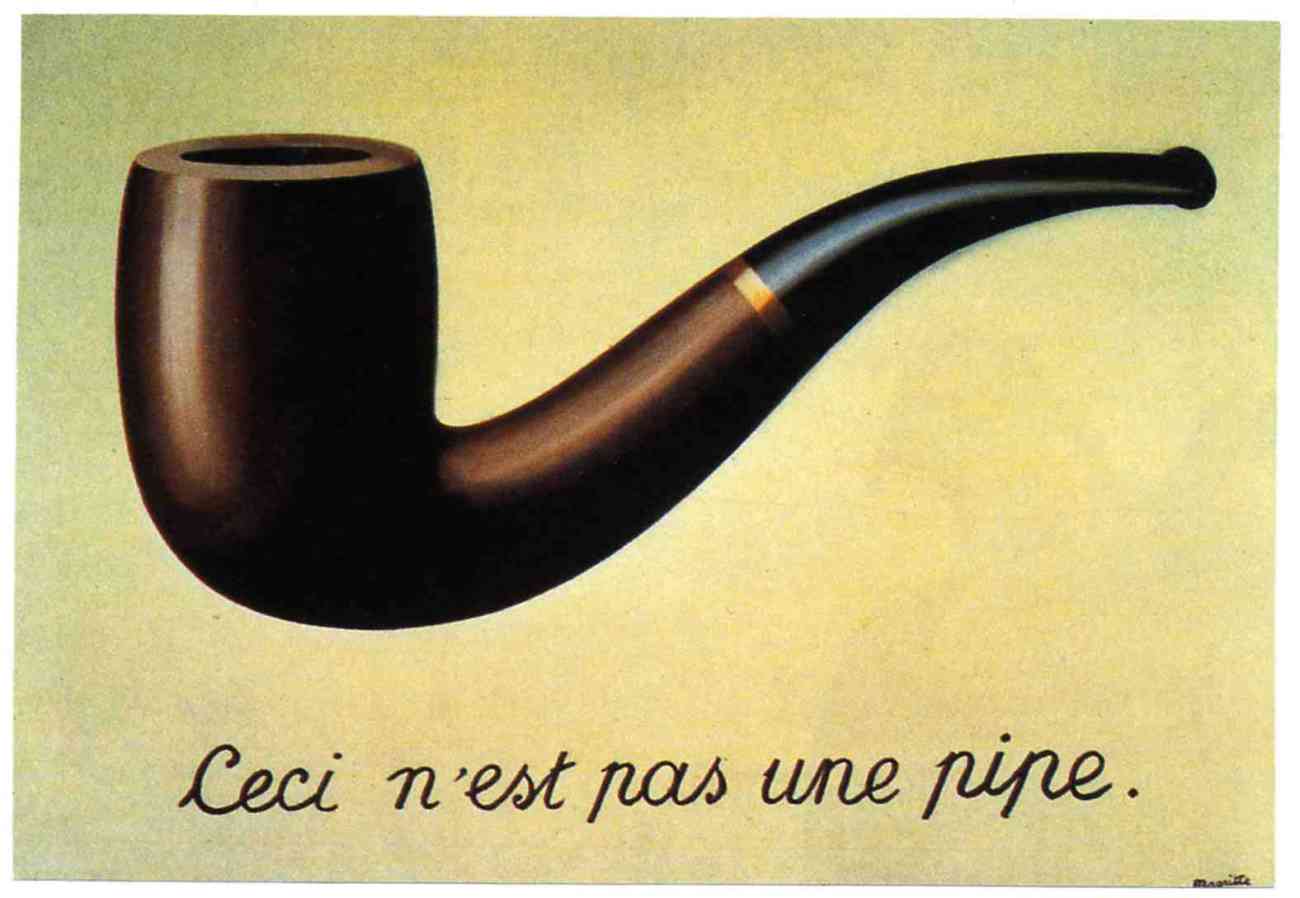

Look at this painting. As you do, consider what feelings it provokes.

‘Wheatfield with Crows’ by Vincent Van Gogh, 1890 (Image: Wikimedia Commons)

It is, of course, by Vincent Van Gogh. As you may know, his choices of colour and subject matter were a personal symbolic code. He often used vibrant yellows, considering this colour to represent happiness.

His doctor noted that during his many attacks of epilepsy, anxiety and depression, Van Gogh tried to poison himself by swallowing paint and other substances.

As a consequence, he may have ingested significant amounts of toxic ‘chrome yellow’, which contains lead(II) chromate (PbCrO₄).

Now consider this statement.

“This is the last picture that Van Gogh painted before he killed himself” (John Berger 1972, p. 28)

Look again at the picture.

What do you feel this time?

‘Wheatfield with Crows’ by Vincent Van Gogh, 1890 (Image: Wikimedia Commons)

Certainly our response has changed, though it is difficult to articulate precisely what is different. The image now seems to illustrate this sentence; its symbolic content has altered for us. This example shows how combining two types of information, an image and text, can change the meaning they symbolise.

Some animals can be trained to recognise simple symbols. The psychologist Irene Pepperberg taught her African Grey parrot ‘Alex’ to count; he learned to use numbers as symbols, and could identify quantities of up to 6 items.

An African Grey Parrot (Psittacus erithacus). Professor Irene Pepperberg’s parrot, Alex, learned basic grammar, could identify objects by name, and could count (Image: Wikimedia Commons)

5. The order in which we combine symbols defines their meaning

We put word symbols together into phrases, sentences, descriptions, sayings, stories, poems, documents, manuals, plays, oaths, promises, parodies, pantomimes…

The ordering of words follows rules (grammar and syntax). Animals such as dogs and dolphins show some form of syntactic ability, but there is no evidence that they are on the verge of using what we understand as language. The order of words shows us their relationship, allowing us to understand how the things they describe are interacting. We change the order of our words and phrases to change the meaning we wish to communicate.

For instance, this makes sense:

‘Jane asked Simon to give these flowers to you.’

This doesn’t quite fit our normal understanding of reality…

‘These flowers asked Simon to give Jane to you.’

This works, but the meaning has changed.

‘Simon asked you to give these flowers to Jane.’

However, grammar is not enough. The words in combination need to ‘make sense’ for us to understand the meaning the speaker wishes to communicate.
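The point about word order and sense in the three example sentences can be sketched in Python. This is a minimal illustration, assuming a single hypothetical sentence template (‘X asked Y to give Z to W’) and a small hand-made list of beings that can ask and give; the names `TEMPLATE`, `ANIMATE`, `parse_request` and `makes_sense` are invented for the example and are not a real parser. Position alone assigns the roles, which is why the odd second sentence fits the pattern perfectly well; a separate, equally hypothetical ‘sense’ check is needed to flag it.

```python
from __future__ import annotations

import re

# Hypothetical template: "<asker> asked <giver> to give <gift> to <recipient>".
# Each role is assigned purely by the phrase's position in the sentence.
TEMPLATE = re.compile(
    r"^(?P<asker>.+?) asked (?P<giver>.+?) to give (?P<gift>.+?) to (?P<recipient>.+?)\.?$"
)

# Illustrative assumption: only these phrases refer to beings that can ask or give.
ANIMATE = {"jane", "simon", "you"}


def parse_request(sentence: str) -> dict[str, str] | None:
    """Assign roles by word order; return None if the sentence doesn't fit the template."""
    match = TEMPLATE.match(sentence.strip())
    return match.groupdict() if match else None


def makes_sense(roles: dict[str, str]) -> bool:
    """Grammar alone is not enough: require the asker and the giver to be animate."""
    return roles["asker"].lower() in ANIMATE and roles["giver"].lower() in ANIMATE


if __name__ == "__main__":
    for s in [
        "Jane asked Simon to give these flowers to you.",
        "These flowers asked Simon to give Jane to you.",
        "Simon asked you to give these flowers to Jane.",
    ]:
        roles = parse_request(s)
        print(roles, makes_sense(roles))
```

Swapping the phrases changes which role each one receives, and only the second sentence fails the sense check, mirroring the distinction the text draws between grammar and meaning.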

What does this enable us to say?

‘The Treachery of Images’ by Belgian surrealist painter René Magritte (1928-9). Much of Magritte’s work explored the combination of words and images, and the way that this challenges the meaning that we understand from the components on their own. Here the words and image have been deliberately chosen so that they contradict each other. The painting’s caption reads ‘Ceci n’est pas une pipe’ (‘This is not a pipe’), and what the artist says is true: it isn’t a pipe! It is a two-dimensional representation of a pipe (Image: Wikimedia Commons)

When we make new combinations of words, or add words to a visual signal such as a gesture, we create a new meaning.

We can add adjectives to a description, add qualifiers, combine phrases into a sentence, and make statements one after the other so that our listener associates these ideas. When we embed one phrase inside another of the same kind, as in ‘the rat that ate the malt that lay in the house’, we are using ‘recursion’, a linguistic term borrowed from mathematics.

Our ideas about time vary between cultures, but we all mentally ‘time travel’ by revisiting our memories. For instance, the scent of something can evoke a memory that transports us back into an earlier event; suddenly we experience again the emotions and sensations we felt at that time. Putting our current selves into the past memory, or imagining a future scenario and inserting ourselves into that story, is a form of recursion.

Memory allows us to link speaking and listening with the meanings of our words. Our language is well structured to express recursive ideas easily, which suggests that our thinking also uses recursion.

Why are we able to do this?

An illustration by Randolph Caldecott (1887) for ‘The House that Jack Built’. This traditional British nursery rhyme uses recursion to build up a cumulative tale. The sentence is expanded by adding to one end (end recursion). Each addition adds an increasingly emphatic meaning to the final item of the sentence (i.e. the house that Jack built) (Image: Wikimedia Commons). The final version of the combined phrases ends like this:

This is the horse and the hound and the horn

That belonged to the farmer sowing his corn

That kept the cock that crowed in the morn

That woke the priest all shaven and shorn

That married the man all tattered and torn

That kissed the maiden all forlorn

That milked the cow with the crumpled horn

That tossed the dog that worried the cat

That killed the rat that ate the malt

That lay in the house that Jack built.
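The cumulative structure of the rhyme can be mimicked with a recursive function, in which each new phrase contains the whole previous phrase as its final clause. This is a minimal Python sketch using only a shortened subset of the rhyme’s lines; the names `HOUSE`, `CHAIN`, `describe` and `verse` are illustrative, not taken from any source.

```python
# Base phrase that every verse eventually ends with.
HOUSE = "the house that Jack built"

# Each entry: (new item, verb linking it to the item before it in the list).
# Only a shortened subset of the rhyme is used here.
CHAIN = [
    ("the malt", "lay in"),
    ("the rat", "ate"),
    ("the cat", "killed"),
    ("the dog", "worried"),
]


def describe(level: int) -> str:
    """Build the noun phrase for CHAIN[level - 1] recursively.

    Level 0 is the base case (the house). Every other level names its item
    and then embeds the complete phrase for the previous level as a
    relative clause at its end.
    """
    if level == 0:
        return HOUSE
    item, verb = CHAIN[level - 1]
    return f"{item}\nThat {verb} {describe(level - 1)}"


def verse(level: int) -> str:
    return "This is " + describe(level) + "."


if __name__ == "__main__":
    for n in range(len(CHAIN) + 1):
        print(verse(n), end="\n\n")
```

Each call adds one item and one line, so the verses grow exactly as the rhyme does, while the embedded clause that ends every verse stays the same.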

Our thinking capacity, through which we learn and remember, means that we can copy and learn to use language. Although some brain regions appear specialised for roles in memory and language, our ‘language function’ uses our entire brain, and cannot be dissociated from our minds.

Our ‘language brain’ includes the ‘basal ganglia’; these are clusters of neurons deep within the brain that connect the outer cortex and thalamus with lower brain regions.

We need this connectedness to coordinate movements in our fingers, to understand the relationships between words that are inferred by their order in our phrases, and to solve abstracted (theoretical) problems. This network interacts with ‘mirror neurons’ which allow us to relate to and decode the posture, speech and emotional cues of others.

The basal ganglia that influence our speech also regulate the muscles controlling our posture. Standing is therefore more than just balancing on two legs; it is a whole body activity and requires much finer muscle control than walking on all fours. It also frees the hands, which allows us to manipulate tools. Lieberman suggests that it is the fine motor control required to maintain our upright posture which pre-adapted our ancestors for manipulating hand tools as well as the tongue, lips and other structures that make speech possible. This upright posture is linked with a remodelling of our breathing apparatus, giving us more control over our larynx.

Philip Lieberman’s work with people suffering from Parkinson’s disease suggests that it is the ability to remember that makes speaking possible. Parkinson’s patients have degraded nerve circuits in their basal ganglia, and they have short-term memory problems and difficulties with balancing and making precise finger movements. They also struggle with understanding and using metaphors and longer word sequences. This suggests that when we speak we are using the circuitry for sorting and remembering movement sequences, irrespective of whether these produce words or actions.

The evolution of our upright posture has remodelled our entire physiology, from breathing to childbirth. It frees the hands, allowing us to perform delicate and precise sequences of tasks. Selection for the ability to sequence our manual motor skills precisely may have provided our ancestors with the means to better sequence their thoughts (Image: Wikimedia Commons)


The nerve networks that control our limbs and voices are linked across all vertebrates. Our basic ‘walking instinct’ initially activates Central Pattern Generator circuits driving movement in all four limbs. These are the same neural outputs that control our lips, tongue and throat.

Conclusions: What does this say about our language?

Captain Odysseus stands upright against the mast. This posture is distinctive of our species, and has many implications for our speech, language and other actions (Image: Wikimedia Commons)

- Our hominin ancestors evolved to use symbolic words and stories as a code to store and share memories, develop new skills and ideas, and coordinate their intentions and actions with their tribe.

- When we revisit our memories or ‘reword’ our experiences into new sequences, we remodel the past, and project our thoughts into the future.

- The control we have over our vocal sounds is linked with our neural circuits for movement. The ability to balance ideas and manipulate our tongues is linked to our ability to stand upright, balance on two feet and manipulate tools with our hands.

- Language, then, is a cultural tool that allows us to order our thoughts, go beyond our instincts, share our intentions, and choose our own story.


References

Berger, J. (1972) Ways of Seeing. Penguin Books, London.

Bickerton, D. and Szathmáry, E. (2011) Confrontational scavenging as a possible source for language and cooperation. BMC Evolutionary Biology 11, 261. doi:10.1186/1471-2148-11-261

Corballis, M.C. (2007) The uniqueness of human recursive thinking. American Scientist 95, 240-248.

Corballis, M.C. (2007) Recursion, language, and starlings. Cognitive Science 31, 697-704.

Everett, D. (2008) Don’t Sleep, There Are Snakes: Life and Language in the Amazonian Jungle. Pantheon Books, New York.

Everett, D. (2012) Language: The Cultural Tool. Profile Books, London.

Gentner, T.Q. et al. (2006) Recursive syntactic pattern learning by songbirds. Nature 440, 1204-1207.

Upon reflection; what can we really see in mirror neurons?

“Mirror, mirror on the wall,

who is the fairest of them all?”

“Fair as pretty, right or true;

what means this word ‘fair’ to you?

Fair in manner, moods and ways,

fair as beauty ‘neath a gaze…

Meaning is a given thing.

I cannot my opinion bring

to validate your plain reflection!

You must make your own inspection.”

Mirrors shift our perspective, enabling us to see ourselves directly, and reflect ideas back to us symbolically. But how do we really see ourselves?

In the 1990s, neuroscientists discovered a new type of nerve cell in the brains of macaques. These cells form a network across the premotor cortex, a brain region that plans and controls body movements. They are intriguing: they become active not only when the monkey makes purposeful movements such as grasping food, but also when it watches others do the same. As a result, these cells were named ‘mirror neurons’.

In monkeys and other non-human primates, these nerves fire only in response to movements with an obvious ‘goal’, such as grabbing food. In contrast, our mirror network is active when we observe any human movement, whether or not it is purposeful. Our brains ‘mirror’ both our own actions and those of others, from speaking to dancing.

However, it is difficult to interpret what function these cells are performing. Different researchers suggest that mirror neurons enable us to:

– assign meaning to actions;

– copy and store information in our short term memory (allowing us to learn gestures including speech);

– read other people’s emotions (empathy);

– be aware of ourselves relative to others (giving us a ‘theory of mind’, i.e. the understanding that we have a mind, and that the contents of other people’s minds are similar to our own).

Whilst these opinions are not mutually exclusive, they do seem to reflect the different priorities of these experts. Their varied interpretations highlight how difficult it is to recognise how our beliefs and assumptions shape what our observations can and cannot tell us.

What we can say is that the behaviour of these nerve cells shows that our mirror neuron responses are very different from those of our closest relatives, the other primates.

What do we know about mirror neurons from animals?

A tribe of stump-tailed macaques (Macaca arctoides) watch their alpha male eating. When these macaques observe a meaningful gesture, such as grabbing for food, this triggers a shift in the electrical status of the motor neurons they would use to perform the same action themselves. This ‘mirroring’ is found in other primates, including humans (Image: Wikimedia Commons)

Researchers at the University of Parma first discovered mirror neurons in an area of the macaque brain which is equivalent to Broca’s area in humans. This brain region assembles actions into ordered sequences, e.g. operating a tool or arranging our words into a phrase. Later studies show these neurons connect right across the monkey motor cortex, and respond to many intentional movements including facial gestures.

The macaque mirror system is activated when the monkeys watch other monkeys seize and crack open nuts, when they grab nuts for themselves, or even when they just hear the sound of this happening. Their mirror neurons do not respond to ‘pantomime’ (i.e. a grabbing action made without food present), casual movements, or vocal calls.

Song-learning birds also have mirror-like neurons in the motor control areas in their brains. Male swamp sparrows’ mirror network becomes active when they hear and repeat their mating call. Their complex song is learned by imitating other calls, suggesting a possible role for mirror neurons in learning. This is tantalising, as we do not yet know the extent to which mirror neurons are present in other animals.

![Our visual cortex receives information from the eyes, which is then relayed around the mirror network and mapped onto the motor output to the muscles. First, [purple] the upper temporal cortex (1) receives visual information and assembles a visual description which is sent to parietal mirror neurons (2). These compile an in-body description of the movement, and relay it to the lower frontal cortex (3) which associates the movement with a goal. With these observations complete, inner imitation (red) of the movement is now possible. Information is sent back to the temporal cortex (4) and mapped onto centres in the motor cortex which control body movement (5) (Image: Annotated from Wikimedia Commons)](http://www.42evolution.org/wp-content/uploads/2014/07/G-Mirror-reflex-Carr-etal-2003.jpg)

Our visual cortex receives information from the eyes, which is then relayed around the mirror network to the motor output to the muscles. First, [purple] the upper temporal cortex (1) receives visual information and assembles a visual description of the action. This is sent to parietal mirror neurons (2), which compile an in-body description of the movement and relay it to the lower frontal cortex (3), where the movement becomes associated with a goal. With these observations complete, an inner imitation (red) of the movement is now possible. Information is sent back to the temporal cortex (4) and mapped onto centres in the motor cortex which control body movement (5) (Image: Annotated from Wikimedia Commons)

The primate research team at Parma suggest the mirror system’s role is in action recognition, i.e. tagging ‘meaning’ to deliberate and purposeful gestures by activating an ‘in-body’ experience of the observed gesture. The mirror network runs across the sensori-motor cortex of the brain, ‘mapping’ the gesture movement onto the brain areas that would operate the muscles needed to make the same movement.

An alternative interpretation is that mirror neurons allow us to understand the intention of another’s action. However, because monkey mirror neurons are not triggered by mimed gestures, the intention behind the observed action must presumably be assessed by a higher brain centre before the mirror network is activated.

How is the human mirror system different?

Watching another human or animal grabbing some food creates a similar active neural circuit in our mirror network.

The difference is that our mirror neurons are activated when we observe any kind of movement. Unlike monkeys, when we see a mimed movement we can infer what the gesture means. Even when we stay still we cannot avoid communicating; others can read the emotional content of our posture. In particular, we readily imitate others’ facial expressions.

As we return a smile, our face ‘gestures’. Marco Iacoboni and co-workers have shown that as this happens, our mirror system activates along with our insula and amygdala. This indicates that our mirror neurons connect with the limbic system, which handles our emotional responses and memories, and suggests that emotion (empathy) is part of how we read others’ actions. As we see someone smile and smile back, we feel what they feel.

Spoken words carry more articulatory information than our hearing alone can resolve. Our ability to read and copy the movements of others as they speak may be how we really distinguish and understand these sounds. This ‘motor theory of speech perception’ is an old idea. The discovery of mirror-like responses provides physical evidence of our ability to relate to other people’s movements, suggesting a possible mechanism for this hypothesis.

Sound recording traces of the words ‘nutshell’, ‘chew’ and ‘adagio’. Our speech typically produces over 15 phonemes a second. Our vowels and consonants ‘overlap in time’, and blur together into composite sounds. This means that simply hearing spoken sounds does not provide us with enough information to distinguish words and syllables. In practice we decode words from this sound stream, along with emotional information transmitted through the tone and timbre of phrases, facial expressions and posture (Images: Wikimedia Commons)

Further studies suggest that mirror neurons are part of a brain-wide network made of various cell types. Alongside the mirror cells are so-called ‘canonical neurons’, which fire only when we move. In addition, ‘anti-mirror’ neurons activate only when we observe others’ movements. Brain imaging shows that frontal and parietal brain regions beyond the ‘classic’ mirror network are also active during action imitation. It is not yet clear how the system operates, but together these cells appear to let us relate to others’ actions through the same nerve and muscle circuits we would use to make the observed movements. In this way, we may relate to what is happening in someone else’s mind.

Are mirror neurons our mechanism of language in the brain?

Japanese macaques (Macaca fuscata) grooming in the Jigokudani Hotspring in Nagano Prefecture, Japan. Human and monkey vocal sounds arise from different regions of the brain. Primate calls are mostly involuntary, and express emotion. They are processed by inner brain structures, whereas the human speech circuits are located on the outer cortex (Image: Wikimedia Commons)

Mirror-like neurons activate whether we are dancing or speaking. Patients with brain damage that disrupts these circuits have difficulties understanding all types of observed movements, including speech. This suggests that we use our extended mirror network to understand complex social cues.

Our mirror neuron responses to words map onto the same brain circuits as other primates use for gestures. However, the signals that produce our speech and monkey vocal calls arise from different brain areas. This suggests that our speech sounds are coded in the brain not as ‘calls’ but as ‘vocal gestures’, and points to a possible origin of speaking as a form of ‘vocal grooming’ that socially bonded the tribe.

When we think of or hear words, our mirror network activates the sensory, motor and emotional areas of the brain. We thus embody what we think and say. Michael Corballis and others consider that mirror neurons are part of the means by which we have evolved to understand words and melodic sounds as ‘gestures’.

‘Woman Grasping Fruit’ by Abraham Brueghel, 1669; Louvre, Paris. The precision control of the grasping gesture she uses to pluck a fig from the fruit bowl is unique to humans. The intensity of her expression implies many layers of meaning to what we understand from this picture (Image: Wikimedia Commons)

What is unclear is how we put meaning into these words. Some researchers have suggested that mirror neurons anchor our understanding of a word into sensory information and emotions related to our physical experience of its meaning. This would predict that our ‘grasp’ of the meaning of our experiences arises from our bodily interactions with the world.

Vocal gestures would have provided our ancestors with an expanded repertoire of movements to encode with this embodied understanding. Selection could then have elaborated these gestures to include visual, melodic, rhythmical and emotional information, giving us a route to the symbolic coding of our modern multi-modal speech.

We produce different patterns of mirror neuron activity in relation to different vowel and consonant sounds, as well as to different sound combinations. Also, the same mirror neuron patterns appear when we watch someone moving their hands, feet and mouth, or when we read word phrases that mention these movements.

Wilder Penfield used the ‘homunculus’ or ‘little man’ of European folklore to produce his classic diagram of the body as being mapped onto the brain. A version of this is shown here; mirror neurons map incoming information onto the somatosensory cortex (shown left) and outputs to the muscles from the motor cortex (right). These brain regions lie adjacent to each other (Image: Wikimedia Commons)

We process word sequences in higher brain centres at the same time as lower brain circuits coordinate the movements required for speech production and non-verbal cues. Greg Hickok suggests that our speech function operates by integrating these different levels of processing into the same multi-modal gesture.

Mirror neurons connecting the brain cortex and limbic system may allow us to synchronously process our understanding of an experience with our emotional responses to it. This allows us to consciously control our behaviour, adapt flexibly to our world, and communicate our understanding to others and to ourselves.

Smoke and mirrors; what do these nerves really show and tell?

People floating in the Dead Sea. Our ability to read emotional information from postures means that we can intuit information about people’s emotional state even when they are not visibly moving (Image: Wikimedia Commons)

The word ‘mirror’ conjures up strong images in our minds, and this choice of name may have influenced what we look for in our data on mirror neurons. Nevertheless, these cells do appear to be crucial for language. This and other evidence suggests that our ability to speak and to read meaning into movement is a property of our whole brain and body.

Single nerve measurements show that the mirror neuron network is a population of individual cells with distinct firing thresholds. Different subsets of these neurons are active when we see similar movements made for different purposes. This suggests that the network responds flexibly to our experience.

Cecilia Heyes’ research shows that our mirror network is a dynamic population of cells, modified by the sensory stimulation our brain receives throughout life. She suggests that these mirror cells are ‘normal’ neurons that have been ‘recruited’ to mirroring, i.e. adopted for a specialised role: to correlate our experience of observing and performing the same action.

This gives us a possible evolutionary route for the appearance of mirror neurons: recruitment of motor cortex cells to networks used for learning by imitation would create a population of mirror cells. This predicts that:

i. Mirror-like networks will be found in animals which learn complex behaviour patterns, such as whales. (They are already known in songbirds.)

One of the many dogs Ivan Pavlov used in his experiments (possibly Baikal); Pavlov Museum, Ryazan, Russia. Note the saliva catching container and tube surgically implanted in the dog’s muzzle. These dogs were regularly fed straight after hearing a bell ring. In time, the sound of the bell alone made them salivate in anticipation of food. This experience had trained them to code the bell sound with a symbolic meaning, i.e. to indicate the imminent arrival of food (Image: Wikimedia Commons)

ii. It should be possible to generate a mirror-like network in other animals by training them to associate a stimulus with a meaning, perhaps a symbolic meaning as in Pavlov’s famous ‘conditioned reflex’ experiments with dogs.

Mirror neurons, then, show us that something unusual is going on in our brain. They reveal that we use all of our senses to relate physically to movement and emotion in others, and to understand our world. They are part of the system we use to learn and imitate words and actions, communicate through language, and interact with our world as an embodied activity.

However, beyond this, we cannot yet see what else they reveal. Until we do, our conclusions about these neurons must remain ‘as dim reflections in a mirror’.

Conclusions

- Monkey mirror neurons relate the observations of intentional movements to a sense of meaning.

- The human mirror network activates in response to all types of human movements, including the largely ‘hidden’ movements of our vocal apparatus when we speak.

Double rainbow. The second rainbow results from a double reflection of sunlight inside the raindrops; the raindrops act like a mirror as well as a prism. The colours of this extra bow are in reverse order to the primary bow, and the unlit sky between the bows is called Alexander’s band, after Alexander of Aphrodisias who first described it (Image: Wikimedia Commons)

- These neurons are a component of the neural network that allows us to internally code meaning into our words, and ‘embody’ our memory of the idea they symbolise.

- The mirror network neurons seem to be part of an expanded empathy mechanism that connects higher and lower brain areas, allowing us to understand our diverse experiences from objects to ideas.

- These cells are recruited into the mechanism by which we learn symbolic associations between items (such as words and their meaning). This shows that it is our thinking process, rather than the cells of our brain, that makes us uniquely human.

Text copyright © 2015 Mags Leighton. All rights reserved.

References

Aboitiz F & García VR (1997) ‘The evolutionary origin of the language areas in the human brain. A neuroanatomical perspective’ Brain Research Reviews 25(3); 381-396 doi: 10.1016/S0165-0173(97)00053-2

Aboitiz F et al (2005) ‘Imitation and memory in language origins’ Neural Networks 18(10); 1357.. doi: 10.1016/j.neunet.2005.04.009

Arbib M (2005) ‘The mirror system hypothesis: how did protolanguage evolve?’ Ch 2 (pp 21-47) in Tallerman M (ed) ‘Language Origins’ Oxford University Press, Oxford, UK

Arbib MA (2005) ‘From monkey-like action recognition to human language: an evolutionary framework for neurolinguistics’ Behavioral and Brain Sciences 28(2); 105-124

Aziz-Zadeh L et al (2006) ‘Congruent Embodied Representations for Visually Presented Actions and Linguistic Phrases Describing Actions’ Current Biology 16(18); 1818-1823

Braadbaart L (2014) ‘The shared neural basis of empathy and facial imitation accuracy’ NeuroImage 84; 367-375

Bradbury J (2005) ‘Molecular Insights into Human Brain Evolution’ PLoS Biology 3(3); e50 doi:10.1371/journal.pbio.003005

Carr L et al (2003) ‘Neural mechanisms of empathy in humans: A relay from neural systems for imitation to limbic areas’ Proceedings of the National Academy of Sciences of the United States of America 100(9); 5497-5502

Catmur C et al (2011) ‘Making mirrors: Premotor cortex stimulation enhances mirror and counter-mirror motor facilitation’ Journal of Cognitive Neuroscience 23(9); 2352-2362 doi: 10.1162/jocn.2010.21590 http://www.mitpressjournals.org/doi/pdf/10.1162/jocn.2010.21590

Catmur C et al (2007) ‘Sensorimotor Learning Configures the Human Mirror System’ Current Biology 17(17); 1527-1531 http://www.sciencedirect.com/science/journal/09609822

Corballis MC (2002) ‘From Hand to Mouth: The Origins of Language’ Princeton University Press, Princeton, NJ, USA

Corballis MC (2003) ‘From mouth to hand: Gesture, speech, and the evolution of right-handedness’ Behavioral and Brain Sciences 26(2); 199-208

Corballis MC (2010) ‘Mirror neurons and the evolution of language’ Brain and Language 112(1); 25-35 doi: 10.1016/j.bandl.2009.02.002

Corballis MC (2012) ‘How language evolved from manual gestures’ Gesture 12(2); 200-226

Ferrari PF et al. (2003) ‘Mirror neurons responding to the observation of ingestive and communicative mouth actions in the monkey ventral premotor cortex’ European Journal of Neuroscience 17 (8); 1703–1714

Ferrari PF et al (2006) ‘Neonatal imitation in rhesus macaques’ PLoS Biology 4(9); 1501-1508 doi: 10.1371/journal.pbio.0040302 http://biology.plosjournals.org/archive/1545-7885/4/9/pdf/10.1371_journal.pbio.0040302-L.pdf

Galantucci B et al (2006) ‘The motor theory of speech perception reviewed’ Psychonomic Bulletin & Review 13(3); 361–377 PMCID: PMC2746041 http://www.ncbi.nlm.nih.gov/pmc/articles/PMC2746041/

Gallese V et al (1996) ‘Action recognition in the premotor cortex’ Brain 119; 593–609

Gentilucci M & Corballis MC (2006) ‘From manual gesture to speech: A gradual transition’ Neuroscience & Biobehavioral Reviews 30(7); 949-960

Hage SR & Jürgens U (2006) ‘Localization of a vocal pattern generator in the pontine brainstem of the squirrel monkey’ European Journal of Neuroscience 23(3); 840-844 doi: 10.1111/j.1460-9568.2006.04595.x

Heyes C (2010) ‘Where do mirror neurons come from?’ Neuroscience & Biobehavioral Reviews 34(4); 575-583 http://www.sciencedirect.com/science/article/pii/S0149763409001730

Heyes CM (2001) ‘Causes and consequences of imitation’ Trends in Cognitive Sciences 5; 245–261

Hickok G (2012) ‘Computational neuroanatomy of speech production’ Nature Reviews Neuroscience 13, 135-145 doi:10.1038/nrn3158

Jürgens U (2003) ‘From mouth to mouth and hand to hand: On language evolution’ Behavioral and Brain Sciences 26(2); 229-230

Kemmerer D & Gonzalez-Castillo J (2008) ‘The Two-Level Theory of verb meaning: An approach to integrating the semantics of action with the mirror neuron system’ Brain and Language 112(1); 54-76 doi: 10.1016/j.bandl.2008.09.010

Keysers C & Gazzola V (2009) ‘Expanding the mirror: vicarious activity for actions, emotions, and sensations’ Current Opinion in Neurobiology 19(6); 666-671 doi: 10.1016/j.conb.2009.10.006

Kohler E et al (2002) ‘Hearing Sounds, Understanding Actions: Action Representation in Mirror Neurons’ Science 297(5582); 846-848 doi: 10.1126/science.1070311

Molenberghs P et al (2012) ‘Activation patterns during action observation are modulated by context in mirror system areas’ NeuroImage 59(1); 608–615

Molenberghs P et al. (2012) ‘Brain regions with mirror properties: A meta-analysis of 125 human fMRI studies’ Neuroscience & Biobehavioral Reviews 36(1); 341-349 http://dx.doi.org/10.1016/j.neubiorev.2011.07.004

Molenberghs P et al (2009) ‘Is the mirror neuron system involved in imitation? A short review and meta-analysis’ Neuroscience & Biobehavioral Reviews 33(7); 975-980 doi: 10.1016/j.neubiorev.2009.03.010

Mukamel R et al (2010) ‘Single-neuron responses in humans during execution and observation of actions’ Current Biology 20(8); 750–756 http://dx.doi.org/10.1016/j.cub.2010.02.045

Pohl, A et al. (2013) ‘Positive Facial Affect – An fMRI Study on the Involvement of Insula and Amygdala’ PLoS One 8(8): e69886. doi:10.1371/journal.pone.0069886 PMCID: PMC3749202

Prather JF et al (2008) ‘Precise auditory-vocal mirroring in neurons for learned vocal communication’ Nature 451; 305-310

Pulvermüller F (2005) ‘Brain mechanisms linking language and action’ Nature Reviews Neuroscience 6; 576-582

Pulvermüller F et al (2005) ‘Brain signatures of meaning access in action word recognition’ Journal of Cognitive Neuroscience 17; 884-892

Pulvermüller F et al (2006) ‘Motor cortex maps articulatory features of speech sounds’ PNAS 103 (20); 7865–7870 doi: 10.1073/pnas.0509989103

Rizzolatti G & Luppino G (2001) ‘The cortical motor system’ Neuron 31; 889–901

Rizzolatti G (1996) ‘Premotor cortex and the recognition of motor actions’ Cognitive Brain Research 3; 131–141

Pavlov IP (1927) ‘Conditioned Reflexes; an investigation of the physiological activity of the cerebral cortex’ OUP, London (republished 2003 as ‘Conditioned Reflexes’ Dover Publications Ltd, NY, USA)

Rizzolatti G et al (1996) ‘Localization of grasp representation in humans by PET: 1. Observation versus execution’ Experimental Brain Research 111; 246–252

Rizzolatti G, Fogassi L & Gallese V (2002) ‘Motor and cognitive functions of the ventral premotor cortex’ Current Opinion in Neurobiology 12; 149–154

Rizzolatti G et al (2001) ‘Neurophysiological mechanisms underlying the understanding and imitation of action’ Nature Reviews Neuroscience 2(9); 661-670 doi: 10.1038/35090060

Umiltà MA et al (2001) ‘I know what you are doing: A neurophysiological study’ Neuron 31; 155-165